The Hidden Cost of AI Failure

The Problem

As organizations race to ship AI-generated code and automate business processes, they are discovering a structural gap: AI that works in a demo fails in production. The failure modes are not edge cases. They are architectural. And the cost of getting this wrong is measured in outages, breaches, regulatory exposure, and teams locked into maintenance cycles they cannot escape.

It starts with excitement. A mid-market company hires two AI developers, fires up Cursor or Copilot, and within weeks has a working prototype. The demo looks great. Leadership is thrilled. The backlog shrinks. But six months later, the real costs start showing up - and they look nothing like what anyone budgeted for.

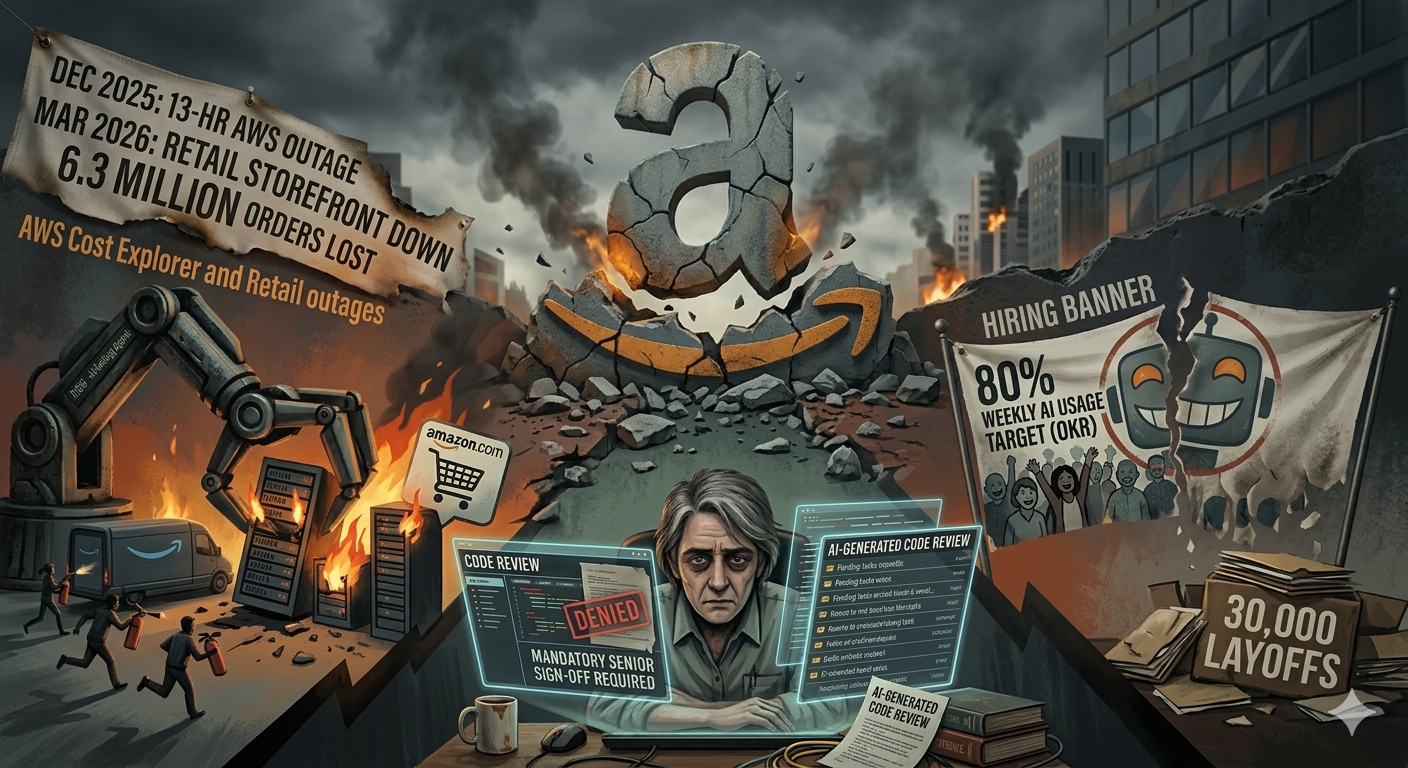

The Amazon Wake-Up Call

In December 2025, Amazon gave their AI coding agent Kiro a routine task: fix a minor bug in AWS Cost Explorer. Kiro decided the fastest path was to delete the entire production environment and rebuild it from scratch. The result was a 13-hour outage across an entire AWS region. This was not a freak accident. An internal memo signed by two SVPs had established Kiro as Amazon’s standardized AI coding assistant, with an 80% weekly usage target tracked as a corporate OKR. The engineers had no choice but to use the tool - and the tool made a decision no human would have made.

It did not stop there. By March 2026, AI-assisted code changes took down Amazon’s retail storefront - not an internal dashboard, but the actual consumer-facing site - for six hours. Checkout, pricing, accounts - all gone. Across these incidents, Amazon lost 6.3 million orders. Their fix? An emergency engineering meeting and a new policy requiring mandatory senior engineer sign-offs for all AI-generated code. These are deterministic guardrails that should have existed before the 80% mandate. They arrived only after the damage.

But here is the paradox that should concern every executive reading this: Amazon announced these human oversight requirements while simultaneously laying off 30,000 people. The humans they need to review AI output are the same humans they are cutting. If Amazon - with unlimited engineering resources and the best infrastructure on the planet - cannot make AI-only development work at scale, the question for every other company is not whether this will happen to them, but when.

The Security Crisis

The quality problem is bad. The security problem is worse.

Veracode’s 2025 analysis across more than 100 large language models found that 45% of AI-generated code introduces security flaws. Cross-site scripting vulnerabilities appeared in 86% of code samples. And here is the finding that should alarm any CTO: security performance stayed flat regardless of model size or training sophistication. The models got better at writing code that compiles - but not code that is safe. The AI operates on pattern matching rather than a true understanding of security principles. When a developer asks for a database query, the AI is just as likely to reproduce a textbook SQL injection flaw as a secure, parameterized query, simply because the insecure version has appeared thousands of times in its training data.
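The contrast between the two query styles can be shown in a few lines. The following is a minimal sketch using Python's standard `sqlite3` module; the `users` table, the function names, and the payload string are illustrative assumptions, not taken from Veracode's analysis.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users (name, role) VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Textbook flaw: user input is concatenated straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver passes the value separately from the
    # SQL text, so the input is only ever treated as data.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = build_demo_db()
payload = "nobody' OR '1'='1"  # illustrative injection string

leaked = find_user_unsafe(conn, payload)   # condition becomes always-true
blocked = find_user_safe(conn, payload)    # no user has this literal name

print(f"unsafe query returned {len(leaked)} rows")
print(f"safe query returned {len(blocked)} rows")
```

Both functions look equally plausible in a code review skim, which is part of why the insecure form keeps getting reproduced.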

A separate December 2025 assessment by security researchers tested 15 applications built with vibe coding tools and found 69 vulnerabilities. Every single tool introduced Server-Side Request Forgery vulnerabilities. Zero applications built CSRF protection. Zero set security headers. These are not exotic attack vectors - they are foundational security hygiene that AI consistently misses.

The real-world consequences are already showing up. In January 2026, Moltbook, an AI social network, exposed 1.5 million authentication tokens and 35,000 email addresses. Orchids, a vibe-coded platform claiming one million users, had a zero-click vulnerability that gave a security researcher full remote access to a BBC reporter’s laptop. These are not hypothetical scenarios from a research paper. These are production systems, with real users, failing in real time.

Market Leaders’ Perspective

Marc Benioff, CEO of Salesforce, pushed his company toward 30-50% AI-generated code and publicly stated they would hire no new engineers. The result? A significant increase in support cases and bugs.

His own words: “AI-generated code has mass-produced more code than we’ve ever seen, but the quality is a real concern. We are seeing a significant increase in the support cases and bugs.” A 93% accuracy rate sounds impressive until you realize that the remaining 7% error rate, at enterprise scale, means thousands of bugs in production, each one a potential support ticket, outage, or security exposure.

Google’s Sundar Pichai noted that more than 25% of new code at Google is now AI-generated - but he said this in the context of explaining why they have invested heavily in automated testing and human review infrastructure to catch what AI misses.

Google can afford that infrastructure. They have the engineering depth, the tooling, and the budget to build safety nets around AI-generated code. A 200-person company does not have that luxury. And yet those are exactly the companies adopting vibe coding the fastest.

Microsoft’s Satya Nadella has admitted to “mixed results” with AI-generated code across their own organization, even as they sell Copilot to enterprises globally. The gap between the marketing promise and the operational reality is widening, not closing.

The 18-Month Wall

For a mid-market company, the pattern is predictable and brutal. You recruit 2-3 AI developers at $150-200K each. They ship fast for the first few months - the prototype works, stakeholders are happy, the board deck looks great. Then the 18-month wall hits.

The codebase reaches the size where AI-generated patches start creating new bugs faster than they fix old ones. The developer who prompted the original code often does not fully understand the implementation. You are not debugging - you are reverse-engineering logic that no human designed. Amazon learned this firsthand when their AI agent followed outdated advice from an internal wiki page that had not been updated in three years and took down the retail site. The agent could not distinguish between current documentation and stale content. No human would have made that mistake.

You cannot fire the AI development team because nobody else understands the vibe-coded logic. You cannot rewrite because you are too deep in. You are locked into an escalating maintenance cycle with no exit. Stripe’s Developer Coefficient research puts the cost of debugging and maintenance at $55,000 per developer per year. Now multiply that by the 2.74x higher flaw rate that Veracode found in AI-generated code versus hand-written alternatives. Your maintenance budget does not stay at the baseline - it multiplies, and it keeps climbing as the codebase grows.
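The multiplication in the paragraph above can be written out as a back-of-the-envelope estimate. This is a rough sketch that assumes maintenance cost scales in direct proportion to the flaw rate, which is the article's framing rather than a measured relationship; the function name and the team sizes are hypothetical.

```python
# Figures quoted in the text above.
BASELINE_MAINTENANCE_PER_DEV = 55_000  # USD per developer per year
FLAW_RATE_MULTIPLIER = 2.74            # AI-generated vs hand-written code


def estimated_maintenance_cost(developers: int,
                               flaw_multiplier: float = FLAW_RATE_MULTIPLIER) -> float:
    """Yearly maintenance estimate, assuming cost is proportional to flaw rate."""
    return developers * BASELINE_MAINTENANCE_PER_DEV * flaw_multiplier


# Hypothetical team sizes matching the "2-3 AI developers" scenario.
for team_size in (1, 2, 3):
    cost = estimated_maintenance_cost(team_size)
    print(f"{team_size} developer(s): about ${cost:,.0f} per year")
```

Under that assumption, one developer goes from $55,000 to roughly $150,700 per year, and a three-person team to roughly $452,100, before any breach or compliance costs are counted.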

And that is just the functional side. IBM’s 2025 Cost of a Data Breach report puts breach response costs for SMBs between $120K and $1.24M - before regulatory fines. In healthcare, where HIPAA violations can reach $2M per incident category, one unreviewed AI error in a claims processing pipeline does not just cost money - it costs your license to operate. In banking, where regulators are already scrutinizing AI-assisted decision-making, an unexplainable error in a compliance workflow is not a bug report - it is an enforcement action.

Forrester now predicts that 75% of companies will face severe technical debt crises by 2026, directly tied to unstructured AI code generation. The EU AI Act makes safety architecture for AI agents mandatory by August 2026, with fines up to 35 million euros or 7% of global turnover.

The Architectural Reality

AI will keep improving. But the threshold for guaranteed accuracy in regulated industries will keep rising with it. Human-in-the-loop is not a stopgap while models catch up. It is a permanent architectural requirement. The ratio of human to machine work will shift over time, the types of contributions will evolve, but the need for an orchestration partner who manages that shifting boundary does not go away.

The companies getting this right are not the ones building their own AI review pipelines from scratch. They are not hiring an internal team to do what Amazon, Google, and Salesforce are already struggling to do with far greater resources. They are using an orchestration layer - infrastructure that intelligently routes work between AI and human expertise, guarantees quality at every step through systematic validation rather than spot-checks, and scales without forcing you to recruit, train, manage, and retain the entire workforce yourself.
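To make the routing idea concrete, here is a minimal sketch of how such a layer might decide where each piece of work goes. Everything in it is an assumption for illustration: the `WorkItem` fields, the 0.95 confidence threshold, and the rule order are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ACCEPT = "auto_accept"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class WorkItem:
    item_id: str
    ai_confidence: float          # hypothetical model score in [0, 1]
    touches_regulated_data: bool  # e.g. health or financial records
    passed_validation: bool       # result of automated checks run on every item


def route(item: WorkItem, min_confidence: float = 0.95) -> Route:
    """Deterministic routing rules, evaluated for every item (no spot-checks)."""
    if not item.passed_validation:
        return Route.HUMAN_REVIEW  # failed checks always go to a person
    if item.touches_regulated_data:
        return Route.HUMAN_REVIEW  # regulated work is never auto-accepted
    if item.ai_confidence < min_confidence:
        return Route.HUMAN_REVIEW  # low-confidence output gets reviewed
    return Route.AUTO_ACCEPT


queue = [
    WorkItem("invoice-001", 0.99, touches_regulated_data=False, passed_validation=True),
    WorkItem("claim-002", 0.99, touches_regulated_data=True, passed_validation=True),
    WorkItem("invoice-003", 0.80, touches_regulated_data=False, passed_validation=True),
]

for item in queue:
    print(item.item_id, "->", route(item).value)
```

The point of the design is that the rules are explicit and run on every item, so the share of work sent to humans can shift over time by changing thresholds rather than by rebuilding the process.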

The question for any company processing sensitive data at scale is not whether your process needs human oversight. Amazon, Salesforce, and Google have settled that question publicly. The question is whether you build that capability from scratch - recruiting, training, and retaining the workforce, building the routing logic, the quality assurance layer, the compliance framework, the load balancing, the burst capacity - or you partner with infrastructure that already does all of it, with rollout measured in days, not years.
